My Roles -

🎨 End-to-end Product Design

💼 Project Management

🔍 Market & User Research

For -

Tezign -

A startup building AI-empowered creative platforms

With -

Lead Product Manager, Marketing Team, Video Creation Team, Software Developers, AI Engineers

Duration -

Quick Glance 👉

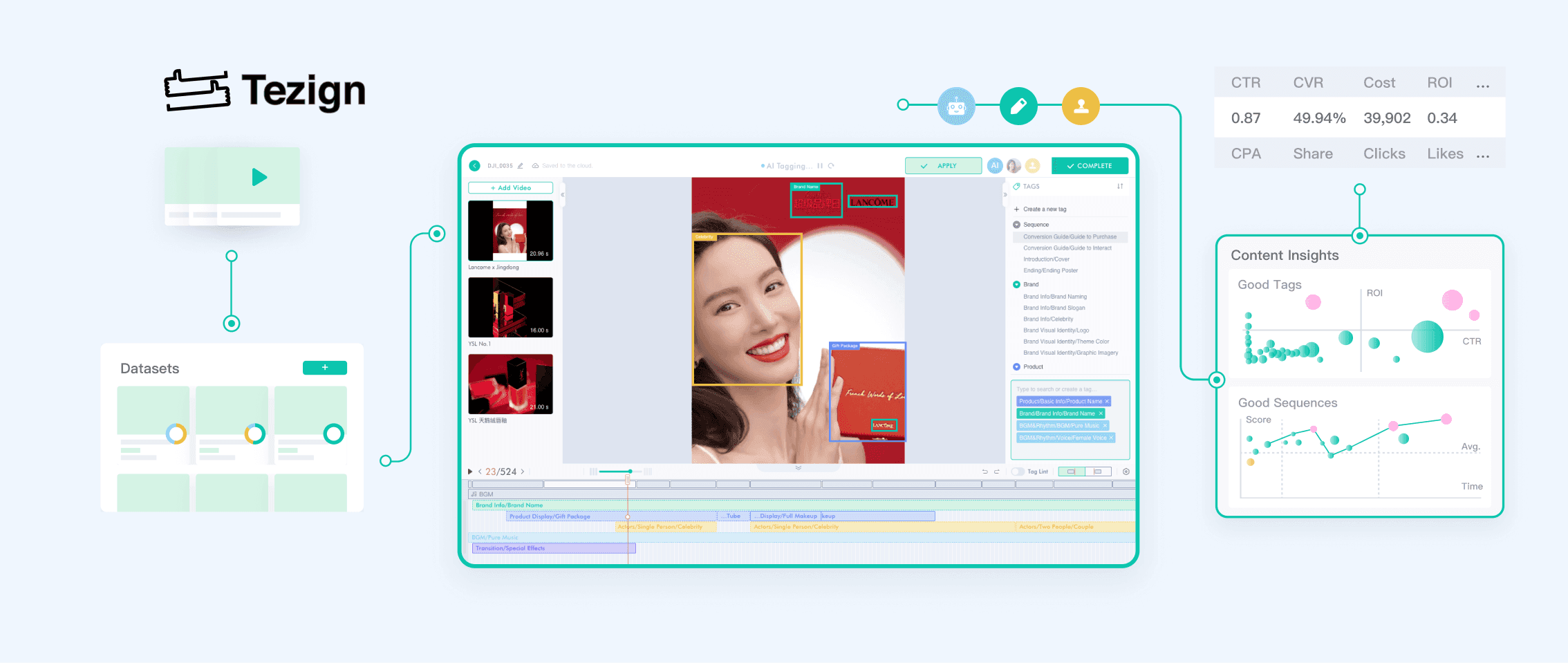

I interned as a Product Designer / Manager at Tezign, a content-tech startup building next-gen creative content platforms that empower content creation, optimization, and distribution.

I led the end-to-end design of a semi-automated video annotation platform within a cross-functional team, streamlining the original hybrid process for generating product-specific, high-granularity video annotations.

We successfully launched this platform within 3 months, resulting in an 80% reduction in video annotation time costs. Additionally, our platform played a pivotal role in validating the concept of insights-driven video marketing, garnering positive feedback from our top-tier clients like YSL China 🎉.

Key abilities:

Product 0-1

User Flow Redesign

Product Architecture

Agile Prototyping

Cross-Boundary Innovation

Background.

High-granularity video metadata crucial for high-quality video insights

Videos have emerged as potent marketing tools for top brands, yet the link between video content and its performance remains a "black box". Tezign's top-tier clients, especially makeup brands (e.g., YSL China), were eagerly seeking comprehensive insights into marketing video performance. Existing video insights fell short of these expectations because high-granularity video content metadata was lacking.

Problem.

Current tools fail to meet the specialized requirements for marketing video annotation

Through initial research, we've learned that current annotation platforms fall short in meeting the following two critical requirements:

Background Research.

Specialized format for marketing video annotations

Through cross-team collaboration with content strategists, marketers, and engineers, Tezign validated the feasibility of a specialized video metadata format aimed at insightful video analysis. Producing metadata in this format requires temporal segmentation of the video and structured, element-level tagging of each segment.
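
To make the idea of temporal segments carrying structured, element-level tags more concrete, here is a minimal sketch in Python. It is not Tezign's actual schema: the class names (`Tag`, `Segment`, `VideoAnnotation`), the field names, and the sample values are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Tag:
    """One structured, element-level tag (hypothetical fields)."""
    layer: str      # assumed layer name, e.g. "visual" or "audio"
    category: str   # assumed tag category, e.g. "product" or "scene"
    value: str      # the tag itself


@dataclass
class Segment:
    """A temporal slice of the video plus the tags that apply to it."""
    start_s: float
    end_s: float
    tags: list[Tag] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Reject empty or reversed time ranges early.
        if not 0 <= self.start_s < self.end_s:
            raise ValueError("segment must satisfy 0 <= start_s < end_s")


@dataclass
class VideoAnnotation:
    video_id: str
    segments: list[Segment] = field(default_factory=list)

    def tags_at(self, t: float) -> list[Tag]:
        """Return every tag whose segment covers time t (in seconds)."""
        return [tag
                for seg in self.segments
                if seg.start_s <= t < seg.end_s
                for tag in seg.tags]


# Illustrative data only; not taken from a real annotated video.
annotation = VideoAnnotation(
    video_id="demo-video",
    segments=[
        Segment(0.0, 4.5, [Tag("visual", "scene", "studio")]),
        Segment(4.5, 9.0, [Tag("visual", "product", "lipstick"),
                           Tag("audio", "music", "upbeat")]),
    ],
)
```

Keeping tags attached to time ranges, rather than to the video as a whole, is what would let a later analysis ask which elements were on screen at a given moment, for example via `annotation.tags_at(5.0)`.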

Current hybrid workflow

Through background research, I discovered that content strategists currently rely on a hybrid workflow to produce video annotations in this specialized format.

Unfortunately, this approach lacks efficiency and compromises the annotations' validity.

Two areas that we can improve:

Through discussions within the cross-functional team, I identified 2 areas that we can improve:

Interviews w/

Stakeholders.

Understand the current hybrid workflow and the gap with users’ expectations

Before diving into building a new platform, I asked myself:

🤔 Why do users think a new annotation platform is necessary?

🤔 What is the gap between the current condition and users’ desired outcome & user experience?

To answer these questions, I interviewed 2 content strategists and 3 video annotators and identified user needs that were unmet or poorly served.

💡 A disorganized collaboration workflow is a significant factor contributing to unsatisfactory annotation quality and reduced efficiency.

After analyzing the current collaboration workflow, I found that content strategists and annotators lacked an efficient way to collaborate on sharing videos, updating tagging structures, reviewing annotations, and merging data.

WorkFlow Redesign #1.

Optimize the collaborative workflow to meet the desired annotation quality

I created a new workflow to streamline collaboration and thus ensure the format and quality of annotations. First, it lets content strategists assign tasks and share videos and tagging structures directly on the platform. Second, real-time visibility into the ongoing annotation process lets them oversee and manage quality more effectively. Furthermore, following discussions with the team, we decided to add the role of "reviewer" to assess annotations.

🤔 While quality can be better controlled with this workflow, the time cost of manual annotation is still a big issue. How can we reduce the time costs in video annotation?

Annotation Process Observation.

Dig deeper into annotators’ behaviors & cognitive process for insights

To figure out how to improve the annotation efficiency and reduce manual labor, I conducted observations to understand how annotators currently work. Participants were asked to think aloud, providing insights into their cognitive processes to help identify design opportunities.

💡 Potential to leverage AI Assistance to significantly diminish cognitive effort and manual labor involved in video annotation!

After discussing our research findings with AI engineers and lead product managers, we decided to integrate Tezign's in-house AI techniques (such as scene segmentation, transcription, and AI tagging) into the annotation platform to significantly reduce both the cognitive and time costs of annotation.

"Though integrating these AI techniques might incur initial technical costs, it's an essential and pivotal step toward establishing the next-gen AI-powered content management platform that augments content production, optimization, and distribution." - Tezign Product Lead

WorkFlow Redesign #2.

A human-AI collaborative annotation workflow to reduce manual labor and time costs

Based on findings from the observations, I redesigned the annotation workflow to integrate AI assistance.

I also facilitated discussions with the development team to choose suitable technical solutions (e.g., smart screen segmentation, OCR, and object recognition) to realize this concept.

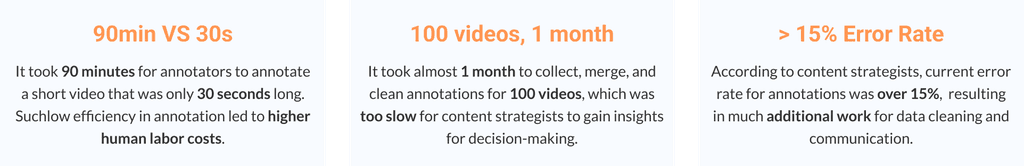

Platform IA.

Organize the information architecture for the new video content analysis platform

I was responsible for developing the 0-1 video content analysis platform. After discussing with the product management team, we prioritized the core features for the minimum viable product: dataset management, tag management, and the annotation tool.

I organized the information architecture to structure all the important features along with their detailed information and interactions. I also mapped out the launch phases by facilitating discussions with the multi-functional team.

Wireframing -

Core User Flow.

Illustrate the main user flow for our MVP

I was responsible for overseeing the entire platform. To ensure a smooth user flow across different features, I created wireframes to show the main user flow that should be covered in our MVP phase.

Wireframing -

Annotation Tool.

Illustrate the layout of the annotation tool

In determining the layout of the annotation tool, I drew inspiration from video editing tools, which similarly decompose videos into different "layers" (e.g., visual, audio, text, and transcripts). After discussions with content strategists, we agreed to adopt a similar layout to reduce the learning curve for users.

Major Iterations.

Because data and tag management already have well-established, widely used design solutions, I concentrated primarily on the user experience design of the annotation tool. This area involves more intricate design demands and higher technical costs, and it is pivotal to improving annotation quality and efficiency.

Thus, this section covers only the iterations I made to the annotation tool.

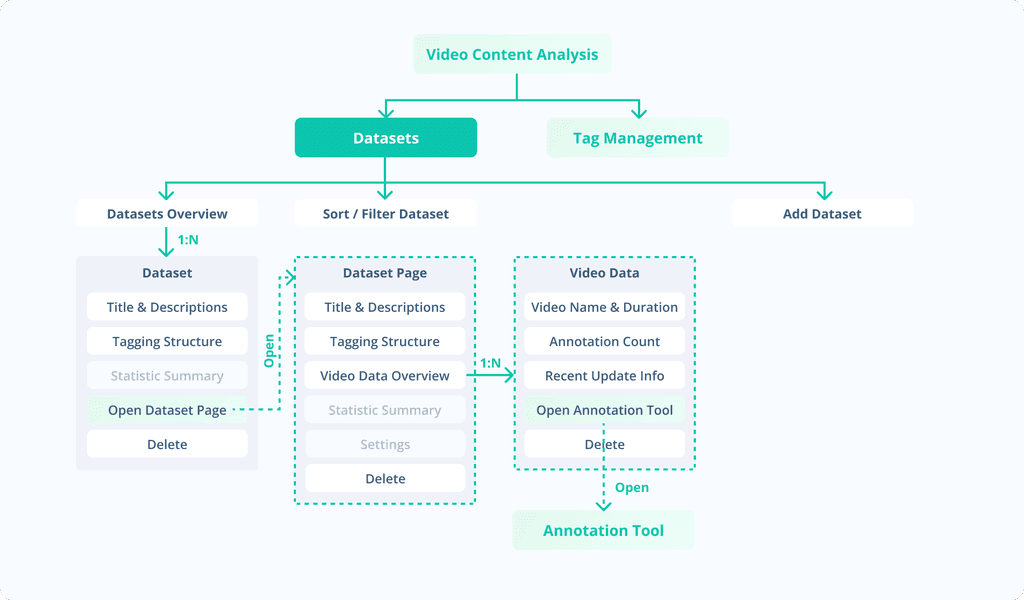

Segmentation.

Facilitate efficient and smooth segmentation

To add a temporal annotation to the video, the first step is to identify where to segment the video. Supporting smooth segmentation is vital to annotation quality and efficiency. Here are some key iterations I made through prototyping and usability testing:

Tag Selection.

Enhance clarity and efficiency in tag selection

After segmentation, annotators need to select a tag for that segment. I iterated on the interaction based on usability testing results and user feedback:

Step1. Import Data

Now: Content strategists can use the centralized platform to manage datasets and monitor the ongoing annotation process in real-time.

Before: Content strategists had to share videos with annotators through shared folders, resulting in disorganized data and task management.

Step2. AI Pre-Annotation

Now: AI automatically segments videos based on screen transitions and transcripts, helping annotators annotate precisely and efficiently. In addition, using ML models such as product, scene, and music recognition, the system completes some annotations automatically.

Before: Annotators had to watch the video and manually locate the segments. The manual annotation process was very labor-intensive and time-consuming.
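
As a rough illustration of how cut points from two sources could be combined into pre-annotated segments, here is a minimal Python sketch. It is an assumption-heavy toy, not the platform's implementation: the function name `merge_boundaries`, the `min_gap_s` threshold, and the idea that both the transition detector and the transcript yield plain timestamps in seconds are all hypothetical.

```python
def merge_boundaries(transitions, sentence_breaks, duration_s, min_gap_s=0.5):
    """Combine two lists of candidate cut points (seconds) into segments.

    A candidate closer than min_gap_s to the previously kept cut is dropped,
    so near-duplicate cuts from the two sources collapse into one.
    """
    # Keep only candidates strictly inside the video, in time order.
    candidates = sorted(t for t in [*transitions, *sentence_breaks]
                        if 0 < t < duration_s)
    cuts = [0.0]
    for t in candidates:
        if t - cuts[-1] >= min_gap_s:
            cuts.append(float(t))
    # Drop a final cut that would leave a sliver at the end of the video.
    if len(cuts) > 1 and duration_s - cuts[-1] < min_gap_s:
        cuts.pop()
    cuts.append(float(duration_s))
    # Pair consecutive cuts into (start, end) segments.
    return list(zip(cuts[:-1], cuts[1:]))


# Hypothetical inputs: cuts from screen transitions and transcript breaks.
segments = merge_boundaries([4.0, 10.0], [4.2, 7.5], duration_s=12.0)
print(segments)  # [(0.0, 4.0), (4.0, 7.5), (7.5, 10.0), (10.0, 12.0)]
```

In a setup like this, the annotator would start from the proposed segments and only adjust the boundaries that look wrong, instead of scrubbing through the whole video to find them.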

Step3. Manual Annotation

Now: Annotators can use AI segments and shortcuts to quickly locate the segments. The fixed tag list makes it easier to search and add tags.

Before: Locating segments and adding tags imposed a significant cognitive burden and demanded extensive manual effort.

🚀 Improvement in annotation efficiency and quality

The new platform reduced annotation time for a 30s video from 90min to 15min. In addition, the error rate dropped from 15% to about 5%.
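
For readers who want to check the arithmetic behind these figures, the short Python sketch below computes the relative reductions. The helper name `relative_reduction` is just an illustrative choice; the inputs are the numbers quoted above, with the final error rate taken as exactly 5% even though it is reported as "about 5%".

```python
def relative_reduction(before: float, after: float) -> float:
    """Fraction by which `after` is smaller than `before`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before


time_cut = relative_reduction(90, 15)   # minutes per 30s video
error_cut = relative_reduction(15, 5)   # error rate in percent

print(f"annotation time: -{time_cut:.0%}")           # annotation time: -83%
print(f"error rate: -{error_cut:.0%} (relative)")    # error rate: -67% (relative)
```

The time saving works out to about 83%, in line with the roughly 80% reduction quoted in the summary. The error rate falls by 10 percentage points, which is about two-thirds in relative terms.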

🫶 Video insights gained recognition from top-tier clients

We used our own platform to generate 10,000+ annotations within 2 weeks of the MVP launch. Based on these annotations, we pitched video insights reports to YSL China and Lancome China. The concept of video tagging for insights generation gained recognition from these top-tier clients!

What I learned.

🧠 Push myself to think from a higher level

At Tezign, being part of a young startup granted me the freedom to delve into various fields like research, design, and product management. I was fortunate to collaborate with supportive colleagues who encouraged exploration. This experience taught me the importance of a higher-level problem-solving approach—questioning the 'why' behind choosing A over B, timing considerations, aligning design approaches with business goals, and evaluating implementation ROI. Collaborating across teams enriched my perspective on these critical aspects.

💖 The beauty of building tools

I came to admire the beauty and fun in creating elegant and natural interactions for technical tools. This journey taught me not just to observe users' behaviors but also to delve into the intricacies of their cognitive processes during these interactions. I've learned the importance of MVP development and rigorous usability testing in uncovering these nuances.

Additional Thoughts.

🧘‍♀️ Art of data, science of content

I asked myself: Can we truly decode unstructured, creative content with semantic depth? Can data genuinely guide us in creating exceptional creative content? I am still enthusiastically exploring how data and AI can be used to comprehend creative content, extract insights, and facilitate content generation. While our assessment of video quality currently relies mainly on business metrics such as ROI, click-through rate, and conversion rate, I anticipate that we will eventually have a more comprehensive approach to evaluating videos, ultimately enhancing video marketing strategies.